Scaling back on LibGuides

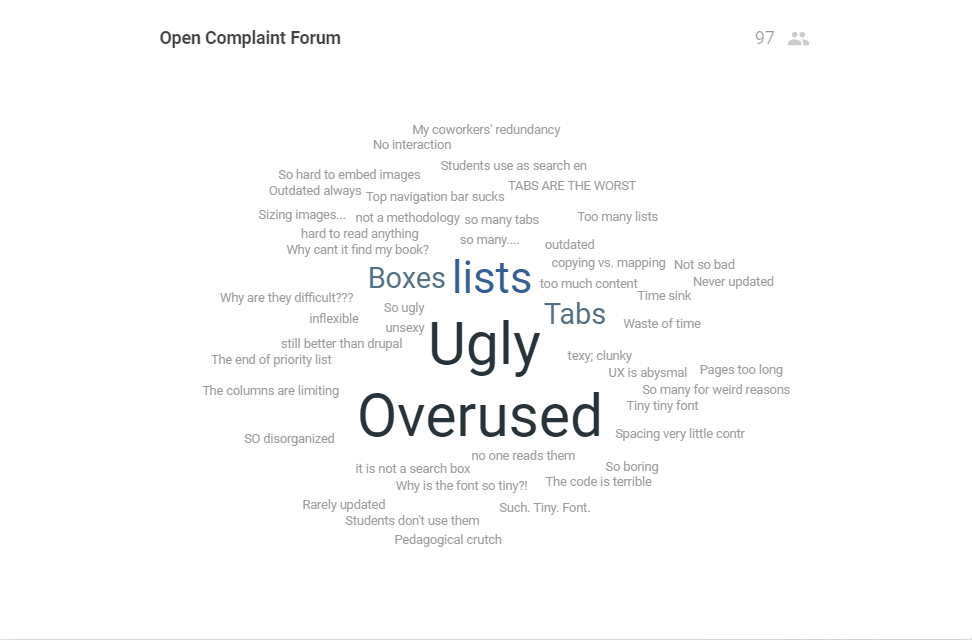

I have a love-hate relationship with LibGuides. So much so that I presented about them at LOEX 2022 just a couple of weeks ago. In that presentation, my mentor and I expressed our frustrations and hopes for LibGuides in what we consider to be their optimal use. We started off by soliciting the audience’s grievances, and, boy, did they deliver.

It seems like everyone has a beef with making LibGuides. If they are so onerous to make…to keep up to date…to get students to use…Why do we continue to make them? Would anything make them better? I have so much to say on this topic, especially after conducting a literature review in preparation for our presentation, but, for now, I will reflect on the aspect that sticks with me the most: centering the learner.

Take a moment to think about how you decide to make a LibGuide. I suspect we fall into these categories:

- Subject LibGuides for liaison subjects/departments

- Course guides at faculty request or of our own volition.

- General guides about a topic of our choosing, whether subject or current event related.

These approaches do not inherently center the learner. They center the information and, honestly, us. We choose what materials to include in the guides based on subject expertise or familiarity with collections. Faculty let us know what they want to see in the guides, sometimes with little room for feedback from us. Or we choose to make a guide based on a topic we find interesting or important. Where are our users in these approaches?

Centering the learner means understanding how our students look for and engage with information. Librarians’ mental models differ from our students’. We have a complete understanding of the research process and present information in a way that reflects that. We create guides that have a certain flow, that have an order that is logical to us: here are the books that might be useful, here are the databases. Students are focused on the product of research. They want access to the information that will get them there, which means they are not reading through our guides like a book.

Our students are also search-dominant, thanks in part to Google. When they see a search box, they will use it both to “assert independence” from the navigation and as an “escape hatch” when they can’t seem to find what they need. They are not browsing our lists of resources on LibGuides just because. It is unsustainable for us to continue to make guides that we *think* might be useful, never update them, and then hand them over to other librarians when we leave an institution.

To me it seems like the best approach to making LibGuides is to make them for specific courses and to embed them into the learning management system (LMS), like Canvas or Blackboard. When we go into that class for an instruction session, either teach directly from the guide or make explicit to students that the guide was made specifically for them. They are more likely to use the guide when it is already embedded into the LMS environment that they are in all the time.

This approach will result in fewer guides, which sounds really great to me. The guides we do make, however, will be more meaningful and useful to our students. I’m willing to try it out.

Register for Summer CRD Journal Club!

The summer series of Journal Club will meet on the third Thursday of the month from 2:00-3:00 pm. We will meet on June 16, July 21, and August 18. Please use this link to register and let us know any topics you are interested in reading about this summer. We look forward to discussing the latest scholarly literature with you!

The Evolution of Collecting Student Feedback

Our librarians have been collecting student feedback at the end of instruction sessions for well over a decade. In the 2007-2009 era, we were one of the few departments on our campus to use Clickers — does anyone remember those? — and they were good for getting anonymized pop-quiz assessment data and for injecting some novelty and humor into library sessions. With software and hardware changes and a campus switch to Mac OS, however, they eventually became more trouble than they were worth. Today the plastic shoebox of numbered remotes serves as my footrest under my desk.

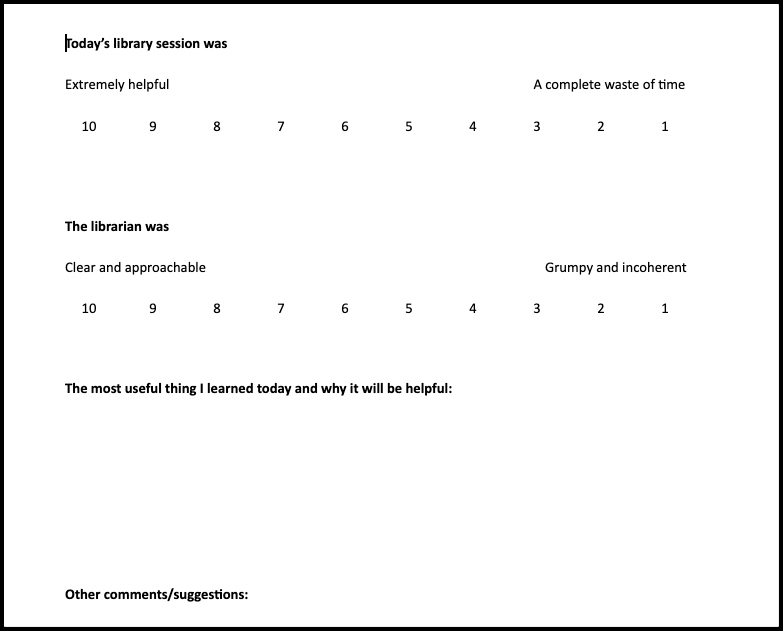

Around 2009, I began to hand out printed half-sheets of paper with Likert scales for students to rate their satisfaction with the session, plus a prompt for a “one-minute paper” about how they expected to use the knowledge they had gained. I would go through the papers after every class session and transcribe and code the results. It was time-consuming (and sometimes humbling) work, but it gave me a good sense of what the students had to say about our research instruction and how it could be improved. This method also gave us some basic quantitative data to present to the administration.

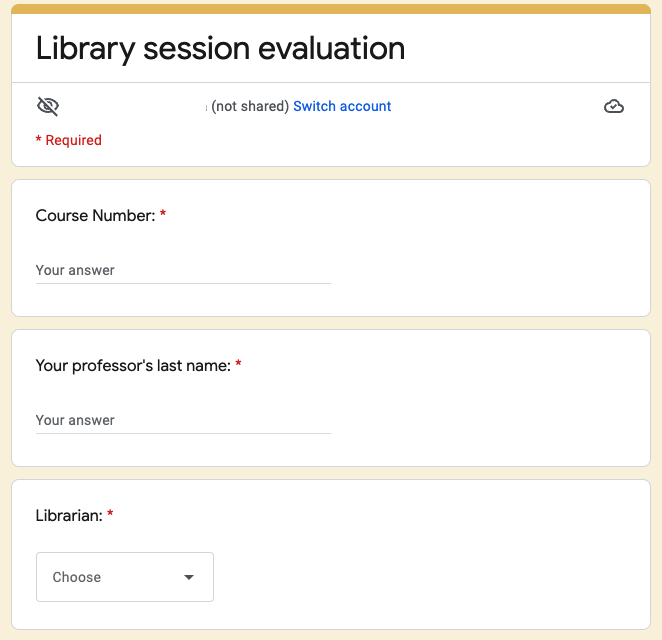

In 2014, we transitioned the same template to a Google Form survey. All of our students receive a MacBook (and, at the time, an iPad) and are expected to bring a mobile device to every class, so this was feasible. The electronic version retained the Likert scales for rating the librarian’s clarity and approachability, as well as another scale to rate the session’s overall helpfulness. It also included long-answer boxes for students to share “The most useful thing you learned today and why it will be helpful” as well as an option to share any general comments they might have.

We embedded the Google Form into a LibGuide page that could be reused on subject guides, which also gave us a “friendly URL” as another distribution option. From a data-management perspective, moving the survey to Forms made my life a lot easier. Responses were exported into a Google Sheets spreadsheet and I could run the average Likert scale scores in seconds. All of the librarians could access the spreadsheet, so each librarian instructor could view, copy, and manage the data from their classes if they liked while still allowing me to retain the comprehensive dataset.
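For readers who would rather script that calculation than run it in Sheets, the following is a minimal sketch, assuming the responses have been downloaded as a CSV file. The column names (`Clarity`, `Approachability`, `Helpfulness`) and the sample rows are hypothetical stand-ins for illustration, not the actual survey export.

```python
import csv
import io
from statistics import mean

# Hypothetical export: column names and values are illustrative only,
# not real survey data.
SAMPLE_CSV = """Timestamp,Clarity,Approachability,Helpfulness,Most useful thing
2014-09-02 10:15,5,5,4,Finding peer-reviewed articles
2014-09-02 10:16,4,5,5,Using the citation tool
2014-09-02 10:17,5,4,,Searching by subject heading
"""

LIKERT_COLUMNS = ["Clarity", "Approachability", "Helpfulness"]


def likert_averages(csv_text, columns):
    """Return the mean score per Likert column, skipping blank answers."""
    scores = {name: [] for name in columns}
    for row in csv.DictReader(io.StringIO(csv_text)):
        for name in columns:
            value = (row.get(name) or "").strip()
            if value:  # a student may leave a scale unanswered
                scores[name].append(int(value))
    return {
        name: round(mean(values), 2) if values else None
        for name, values in scores.items()
    }


averages = likert_averages(SAMPLE_CSV, LIKERT_COLUMNS)
for name, avg in averages.items():
    print(f"{name}: {avg}")
```

To use it with a real export, the sample text would be replaced by the contents of the downloaded file and the column list adjusted to match the form's actual question titles.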

With a few minor adjustments to the questions over the years, this is how we collected student class feedback from 2014 until this past academic year. As Fall 2021 loomed and I reflected that the Likert scale scores had remained consistent for seven years, I decided that continuing to collect those numbers was unnecessary. I eliminated the Likert scale questions. I reworded the first free-answer question, which asked students to identify what they believed would be the most useful takeaway from the session and why. I also changed the second long-answer question to give them an opportunity to leave a question, rather than the vague “any other comments?” prompt we had used previously.

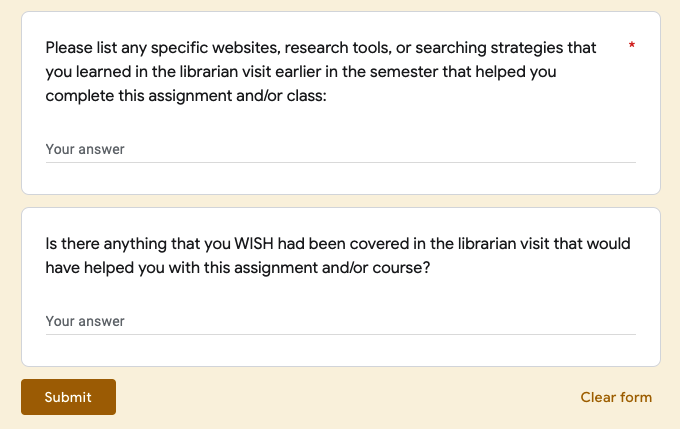

I also created a follow-up library session survey for distribution to students later on in the term, after they had a chance to put our teachings into practice. This survey asked the students to list any specific tools, sites, or strategies that they ended up using, and it also asked if there were any areas where they thought more guidance would have helped. The follow-up survey was sent to faculty either several weeks after the class visit or shortly after a major assignment due date, if we knew when a significant research project was due.

This was a major improvement, but we only used it for one semester! In early January 2022, my director asked me to take a look at ACRL’s Project Outcome. I had heard of it before but somehow had never looked into it; for some reason, I had the impression that it cost money and/or was better suited for large institutions. But I registered for an online presentation about it (“ACRL Project Outcome: Closing the Loop: Using Project Outcome to Assess and Improve a First-Year English Composition Information Literacy Program,” recording available here: https://youtu.be/ICDwuMRc3uY).

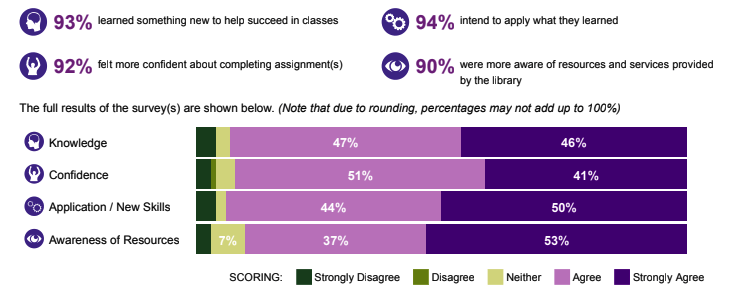

What struck me most as I learned about Project Outcome was that after all of my years of trial and error, the current iteration of my homegrown Google Form survey and the new follow-up survey were virtually identical to the surveys Project Outcome uses. When I realized that Project Outcome would allow me to instantly generate a visual representation of the students’ feedback, as well as compare our results to our Carnegie class peers across the nation, I was sold. Our provost favors quantitative data, and like most university administrators, she has many demands on her time. I’m excited to include this data comparison and visualization in our annual report, as I think it “tells our story” in a numerically based and quick-to-comprehend way.

I am still learning my way around the Project Outcome dashboard and learning how best to administer and manage the surveys. Embedding the survey into a LibGuide page, as I had done with the Google Form, was a definite fail. Many students could not get the embedded survey to load. Fortunately, the direct URL to the survey is brief enough to post on the presentation screen, and I also made the old LibGuide “friendly URL” simply redirect to it. This seems to be working well. Participation in the follow-up survey is low and probably self-selects to more motivated students, but that is an expected limitation. Overall, I look forward to gathering more student feedback via Project Outcome and learning more about the ways that it allows us to analyze and present that data.

Registration to attend the 2022 College & Research Division Spring Workshop is now open! Academic libraries are constantly adapting and evolving to meet the changing needs of our diverse patrons and communities. The pandemic continues to expose fissures in higher education and library employees have been working diligently to address issues as they arise. Perhaps your library has needed to create new policies or implement new services; maybe your library is designing new physical spaces to accommodate patron needs. As the course curriculum evolves, so do library practices.

On Thursday, June 2, at the Madlyn L. Hanes Library at Penn State Harrisburg, we will explore how academic libraries have adapted and evolved in new and different ways to meet the needs of our campuses and communities. A full event program with session descriptions can be found at this link. The Spring Workshop will include a light breakfast and welcome remarks, morning presentations, and a boxed lunch, followed by afternoon presentations.

Investment: PaLA Member $50 | Non-member $75 | Student $25

Register HERE:

https://www.palibraries.org/event/2022CRDSpringConf

If you are interested in staying in a nearby hotel, we encourage you to review these options:

- Comfort Inn and Suites (1.3 miles away from the Penn State Harrisburg Campus)

- Fairfield Inn and Suites (2.2 miles away from the Penn State Harrisburg Campus)

Workshop registration will close on Friday, May 20, 2022.

This project is made possible by a grant from the Institute of Museum and Library Services as administered by the Pennsylvania Department of Education through the Office of Commonwealth Libraries, and the Commonwealth of Pennsylvania, Tom Wolf, Governor. Support is also provided by the College and Research Division of the Pennsylvania Library Association (https://crdpala.org/). Show your appreciation by becoming a member of PaLA! And if you are a member – thank you!

Zotero + LibWizard = Success!

Zotero is a citation manager that has been widely used across many college campuses by students to collect, store, and cite their research references, often at the suggestion of a librarian or professor. As a librarian at the University of Pittsburgh’s Johnstown Campus, I have witnessed the look of amazement on a student’s face once they realize how valuable this tool is, especially if they are working on a lengthy research paper or plan to go to grad school and continue their studies. Anyone who has taught a Zotero (or any citation manager software) workshop should be well familiar with this reaction.

Unfortunately, these moments are few and far between lately. When the COVID-19 pandemic first began, I found myself, like many other academic librarians, wondering how best to provide valuable information literacy instruction virtually. I also found myself thinking about ways to provide citation management workshops online.

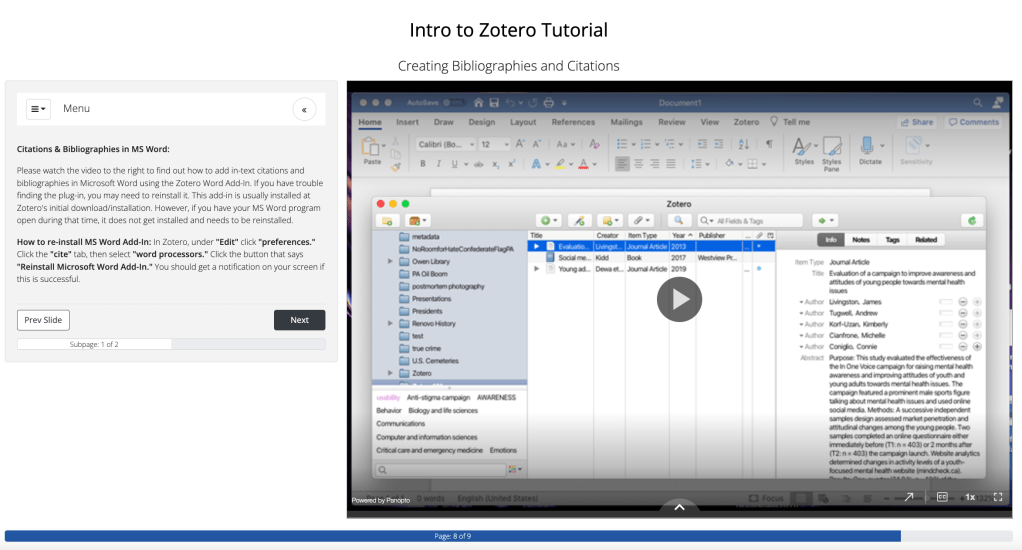

In the fall of 2020, I decided to try out a flipped learning activity for a communications research course that I had worked with on citation management tools the previous year. As someone who has taught Zotero workshops in person pre-pandemic, I knew how technology issues could derail an entire instruction session and I wanted to find a way to provide instruction through asynchronous means, but still be able to connect and engage with the students.

I proposed to the faculty member that I create a self-guided tutorial using Springshare’s LibWizard for her students to complete prior to the class session that I would attend with them on Zoom. This allowed the students to learn the basics of installing and using Zotero before the class met synchronously online, where they could ask questions about the tutorial or learn more specific information that the tutorial didn’t cover. As this was a course outside of my regular liaison area, I had help from my colleague who supports the discipline, and he was able to show the students how to find resources that they could then import into their Zotero libraries.

This approach seems to have worked well, based on feedback from the faculty and students. When instruction resumed in-person, we tried this approach again with success. One of the students answered, “How to cite articles in a much easier way” to our post-class evaluation question about the most useful part of the session, so I know at least one student found some value in learning Zotero, which is a success in my book.

Creating a Self-Guided Zotero Tutorial with LibWizard

- Create your learning objectives (I kept mine broad to be able to use the tutorial for any student, independent of a class requirement).

- Create an outline of how to structure the lesson (it helps if you already have this!).

- Organize your LibWizard slides using your outline:

  - Welcome message/intro

  - Intro about Zotero

  - Lessons on how to use Zotero (where recorded videos will be inserted)

  - Conclusion/Where to get help

- Test out the tutorial (ask for volunteers or student assistants).

- Make changes as needed.

- Share the link and review reports for follow-up.

Pro-Tips

To help create the lessons, I recorded narrated videos that demonstrate the steps I use in an in-person class. I used Panopto, which is available at our institution, but since then I have found Active Presenter to be more user-friendly software for video creation. In later tutorials, I have created videos with Active Presenter and then uploaded them to Panopto. Be sure to include captions and edit any mistakes. Chunking the demonstration videos into shorter lessons helps the user digest the content more easily, and hopefully helps them to retain it better as well. I also included places in the tutorial where the user can submit questions with their email address so I can follow up with them after completion.

If you would like to try the tutorial out yourself, please do so online (Intro to Zotero Tutorial). Please feel free to leave any constructive criticism so that I can make improvements over the summer. I know that there is a new Zotero 6.0 version out now that may offer new features, so I may need to update the lessons.