Connect & Communicate: History is a Group Project

Join CRD’s Connect & Communicate Series for a Webinar on

History is a Group Project: Cross-Campus Collaboration with University Archives

Tuesday, April 28, 2026 from 3:00 PM – 4:00 PM

This session highlights collaborative projects between university archives and two different college courses that integrated archival materials into the classroom. It explores how cross-campus partnerships can support experiential learning, introduce students to archival research, and position archives as active participants in teaching and learning. The session presenter will be Jessica Mando, University Archivist and Special Collections Librarian at Gannon University.

Register at the following link: https://us06web.zoom.us/meeting/register/cz52fkMlT1uDQiItvzrP5w

Upon submitting your registration, you will receive an email confirmation that includes details about connecting to the webinar. This is the only notification you will receive. If you do not receive the confirmation email, please contact Elliott Rose at elliott.c.rose@gmail.com.

For this program, you will need speakers or headphones to hear the presenter. Participants are encouraged to ask questions via the chatbox; moderators will monitor the chatbox and facilitate question and response at the end of the panel discussion. Please continue to share your ideas for programming topics, speakers, or formats with us!

If you or someone you know is doing something great in Pennsylvania’s academic libraries, tell us about it! The Connect & Communicate Series of online programming offered by the PaLA College & Research Division aims to help foster a community of academic librarians in Pennsylvania. Please contact Elliott Rose at elliott.c.rose@gmail.com with questions.

A Tale of Locating Pennsylvania Newspapers

The library profession is dealing with constant change, funding battles, and limited bandwidth in the workplace. These issues have been hitting home since the summer of 2024, when I accepted an interim role at my institution. While I have enjoyed the new challenges, there have been only occasional days or afternoons when I can truly focus on my primary role as a Digital Collections Management Librarian. Since those moments are limited, they have become somewhat precious because I am reminded of why I got into librarianship.

I love getting lost in research and going down a rabbit hole of information. Because I am in a technical services role, most of the reference questions I receive are related to Pennsylvania newspapers. As part of my role as Digital Collections Management Librarian, I serve as the primary contact for newspaper digitization projects and assist in managing the Pennsylvania Newspaper Archive.

Earlier this year, I was presented with a unique situation that started with a thank you note and turned into weeks of research sessions to uncover the location and the current status of a Pennsylvania newspaper. A library user contacted my office to inquire about the status of the Public Spirit newspaper from Clearfield County and how the digitization process was coming along. Up to that point, I had not heard about any requests regarding this title, so I started an investigation by checking the library’s holdings. I found approximately 4 scattered issues that were in stable condition. While I would need to coordinate with colleagues from the Special Collections and Cataloging and Metadata teams, this did not seem like an overly complicated request. After another conversation with the library user, I realized that we were not talking about the same set of newspapers. Their request was specifically referring to portfolio books containing 20 years of issues of the Public Spirit newspaper.

This requestor had conducted an extensive amount of research on this newspaper title and others, so I continued the conversation to gain as much background information as possible. The ongoing communication provided me with a starting point. Here are the facts:

- A listing for the Public Spirit (Clearfield, PA) is not available on the Library of Congress Chronicling America database. I only found listings and digitized content for the following newspapers:

- My institution did not have a record of this newspaper in its collections beyond the 4 sporadic issues.

- The requestor informed me that there were previous discussions about donating the 20 years of newspaper portfolios to our Special Collections Library.

- The last known location of the newspaper portfolios was with a descendant of the original publisher, Matt Savage.

Beyond these basic facts, I understood the urgency regarding the completion of this digitization project because these portfolios are the most complete set of materials associated with this newspaper title. The original printing house for the newspaper suffered extensive damage from a fire, and many community members and local historians thought this newspaper was lost to time. However, the portfolios were discovered in the attic of a descendant of Matt Savage. The newspaper collection would be used to write a county history book, which was authored by members of the Clearfield County Historical Society.

Over the next few months, I would dig a little deeper during each research session to uncover the mystery. This included reviewing more departmental records, reading county histories, conducting genealogical searches for the Savage family, consulting with colleagues in the Special Collections Library, and reaching out to cultural heritage organizations in Clearfield County and the State Library of Pennsylvania. Additionally, I studied reports from a multi-phase initiative called the Pennsylvania Newspaper Project to see if the Public Spirit newspaper was included in the NEH grant. All of this work finally gave me the answers. Sadly, the descendant of Matt Savage who owned this newspaper collection had passed away several years ago. An employee at my library misinformed the requestor about the collection status, and the newspaper was never donated to Penn State. Fortunately, the owner did donate the newspaper collection to the Clearfield County Historical Society. While the material is certainly fragile, this organization is utilizing best practices for preserving and stabilizing the collection. It is such a reward to know that the newspaper has been located, and I am hopeful that conversations about a future digitization project can begin later this year.

If you are ever having trouble locating a Pennsylvania newspaper, please feel free to reach out to your colleagues. Several members of the Pennsylvania Library Association have extensive knowledge in local history and newspapers. Additionally, the Library of Congress Chronicling America website, the Pennsylvania Newspaper Archive hosted by Penn State University Libraries, and the State Library of Pennsylvania are always excellent resources.

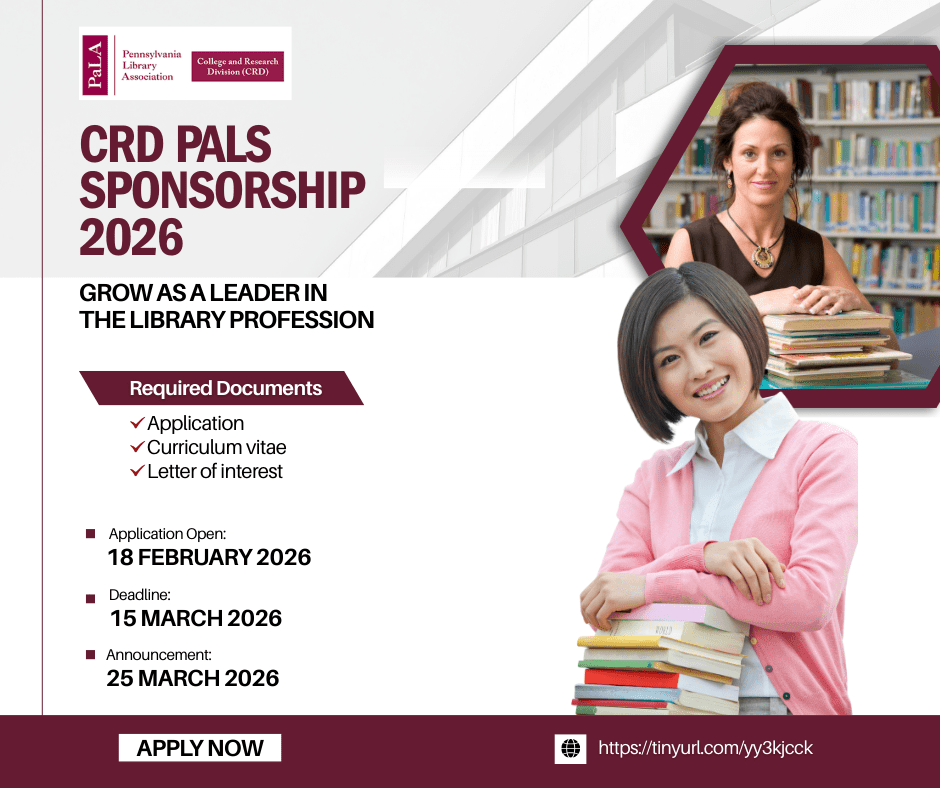

CRD PALS Sponsorship Opportunity

The College and Research Division is offering to sponsor a member to attend the PaLA Academy of Leadership Studies (PALS)! If you are interested in applying for the sponsorship, please check out this link here: https://tinyurl.com/yy3kjcck. The deadline is March 15th.

Survey on Librarians’ Values & Generative AI

Dear Colleagues,

I am conducting a research study about librarians’ individual values and decisions to use or refuse to use generative artificial intelligence. The aim is to help explain differences in librarian attitudes and intentions regarding generative artificial intelligence by examining how these may relate to individual values.

You are invited to participate if you currently work in an academic library and:

- hold an MLIS/MLS degree

- or your primary job responsibilities include providing reference, instruction, cataloging, collection development, or other information services.

Participation consists of completing an anonymous online survey that takes approximately 10-15 minutes. The survey includes questions about your personal values, your views on generative AI, and basic background information. Your responses will be completely anonymous. No identifying information will be collected, and results will be reported only in aggregate.

Survey Link: https://millersville.qualtrics.com/jfe/form/SV_1RJQCCtsDLHb79k

Your participation is entirely voluntary. You may skip any question or stop the survey at any time. There are no penalties for choosing not to participate.

Generative artificial intelligence is influencing many aspects of our professional responsibilities. Your participation will help create a deeper understanding of how our individual values inform our decisions about acceptance or refusal of generative artificial intelligence in our profession.

If you have any questions about the study, please feel free to contact:

Greg Szczyrbak, MSLIS

Millersville University

Email: greg.szczyrbak@millersville.edu

Join CRD’s Connect & Communicate Series for a Webinar on

Disability Accommodations in Academic Libraries

Tuesday, March 3, 2026, 3:00 P.M. – 4:00 P.M.

The process established under the Americans with Disabilities Act is designed to provide equal access to employment for disabled people. However, there remain persistent barriers to requesting and receiving accommodations throughout the library profession and academia. Grounded in federal documentation, LIS research, and personal experience, the presenter will explain the federally mandated process and how it typically works in academic libraries. The webinar will walk attendees through the process to request an accommodation, including how to know if you have an eligible disability, if accommodations could be right for you, what documentation is required, and what federally mandated resources are available. This includes the limitations of “reasonable” accommodations and how to navigate the barriers or discrimination library workers may encounter. It will end with a question and answer session to address attendees’ issues or concerns related to the process generally or specifically at their institution.

Register at the following link: https://us06web.zoom.us/meeting/register/PskrkI5IQaidLR5RbtBWtA

Upon submitting your registration, you will receive an email confirmation that includes details about connecting to the webinar. This is the only notification you will receive. If you do not receive the confirmation email, please contact Elliott Rose at elliott.c.rose@gmail.com.

For this program, you will need speakers or headphones to hear the presenter. Participants are encouraged to ask questions via the chatbox; moderators will monitor the chatbox and facilitate question and response at the end of the panel discussion. Please continue to share your ideas for programming topics, speakers, or formats with us! If you or someone you know is doing something great in Pennsylvania’s academic libraries, tell us about it! The Connect & Communicate Series of online programming offered by the PaLA College & Research Division aims to help foster a community of academic librarians in Pennsylvania. Please contact Elliott Rose at elliott.c.rose@gmail.com with questions.